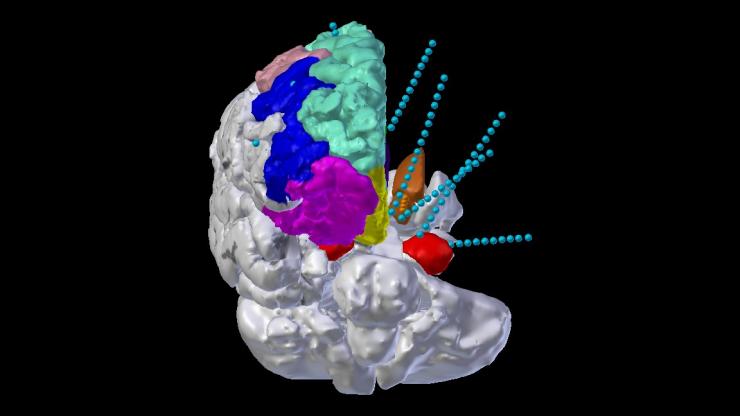

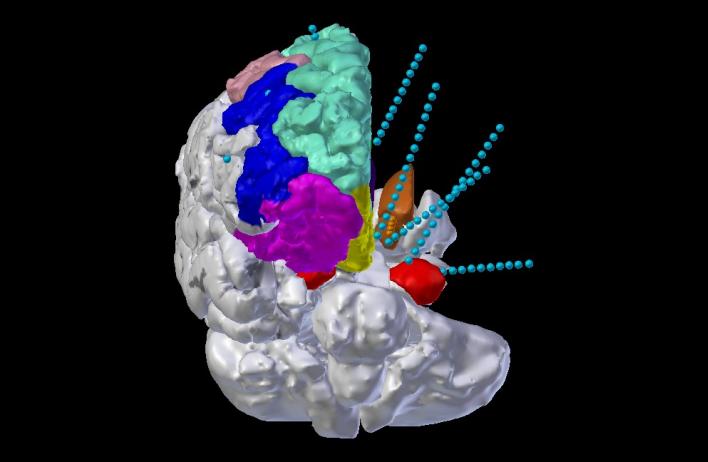

Developing novel targets for neural stimulation therapies: We apply a variety of stimulation-based research paradigms in patients with epilepsy and depression undergoing intracranial monitoring. Our goals are to map the network dynamics underlying emotional phenomena and to modulate them in a targeted way using electrical neurostimulation. Stimulation targets of particular interest include the cingulum bundle, the cingulate cortex, the amygdala, and the insula, among other structures involved in emotion.

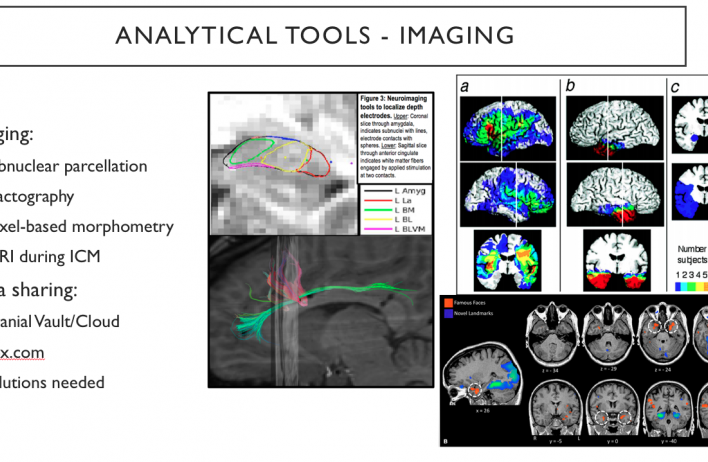

Advanced surgical neuroimaging: We use cutting-edge neuroimaging techniques to visualize and quantify the neural networks involved in affect in our human participants, and to integrate this spatial information with the electrophysiological signatures evoked during particular processes or by stimulation. In particular, we use advanced magnetic resonance imaging techniques to resolve the white matter fibers connecting affective network regions. This information guides neurosurgical targeting and lets us further explore the constructs of structural, functional, and effective neural connectivity.

Affective electrophysiology: We are examining the electrophysiological basis of mood (otherwise known as affect), using intracranial neural recordings as our platform. We are developing novel methods for identifying electrophysiological features of interest in both the time and frequency domains of the recorded neural signals.

Affective bias: We have developed a novel task to examine affective bias, an innate human phenomenon in which one’s own emotional state tends to color the interpretation of external stimuli. This effect is especially pronounced in the evaluation of emotional faces. We use affective bias as a cognitive proxy for emotional state, which gives rapid insight into stimulation-induced changes in mood without depending on a patient’s self-report.

Pulse-Evoked Potentials (PEP): We leverage sensitive research-grade neuroimaging to characterize the structural connectivity between neural network regions, using diffusion-weighted imaging together with probabilistic and deterministic tractography. These data can be analyzed in tandem with electrophysiological indices of effective connectivity: we apply single pulses of electrical stimulation at a hub or connector region and measure the evoked potentials in putatively connected brain regions, which characterizes the latency and magnitude of transmission between regions. This experiment is currently underway in patients with treatment-resistant depression and in patients with epilepsy.
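
As a rough illustration of what "latency and magnitude" can mean for a pulse-evoked potential, the sketch below averages single-pulse trials from one recording contact, subtracts a pre-pulse baseline, and reports the largest deflection in a post-pulse window. It is a minimal example under stated assumptions, not the lab's analysis pipeline: the function name `evoked_response`, its parameters, and the search window are all hypothetical, and real data would also need artifact handling around the stimulation pulse.

```python
from statistics import mean


def evoked_response(trials, sample_rate_hz, pulse_index, baseline_samples, window_ms):
    """Return (latency_ms, peak_amplitude) of the trial-averaged evoked potential.

    trials           -- list of equal-length voltage traces, one per stimulation pulse
    pulse_index      -- sample index at which the pulse was delivered
    baseline_samples -- number of pre-pulse samples used for baseline correction
    window_ms        -- (start, stop) of the post-pulse search window, in ms
    All of these names are illustrative assumptions.
    """
    n_samples = len(trials[0])

    # Average across trials, sample by sample, to suppress activity
    # that is not time-locked to the pulse.
    average = [mean(trial[i] for trial in trials) for i in range(n_samples)]

    # Baseline-correct using the mean of the pre-pulse interval.
    baseline = mean(average[pulse_index - baseline_samples:pulse_index])
    corrected = [value - baseline for value in average]

    # Convert the search window from milliseconds to sample indices.
    start = pulse_index + round(window_ms[0] * sample_rate_hz / 1000)
    stop = min(pulse_index + round(window_ms[1] * sample_rate_hz / 1000), n_samples - 1)

    # The peak is taken as the largest absolute deflection in the window.
    peak_index = max(range(start, stop + 1), key=lambda i: abs(corrected[i]))
    latency_ms = (peak_index - pulse_index) * 1000 / sample_rate_hz
    return latency_ms, corrected[peak_index]
```

With a 1000 Hz recording, a pulse at sample 10, and a deflection 20 samples later, the function returns a latency of 20 ms together with the baseline-corrected amplitude at that sample.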

Autonomic Surveillance: We further characterize the function of mood-relevant brain regions by monitoring the activity of the autonomic nervous system. The extent to which a person’s heart rate increases or their palms sweat is measurably related to their current mood state; we rigorously quantify these variables during emotional neuroscience tasks, as well as during different types of neurostimulation.
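
One simple way to quantify a heart rate change is to compare mean heart rate in a task or stimulation epoch against a baseline epoch. The sketch below assumes heartbeat times (in seconds) have already been detected; the function names `heart_rate_bpm` and `heart_rate_change` are hypothetical and shown only for illustration.

```python
def heart_rate_bpm(beat_times_s):
    """Mean heart rate in beats per minute from a list of beat times in seconds."""
    # Inter-beat intervals are the differences between consecutive beat times.
    intervals = [later - earlier for earlier, later in zip(beat_times_s, beat_times_s[1:])]
    if not intervals:
        raise ValueError("at least two beats are needed to estimate heart rate")
    mean_interval_s = sum(intervals) / len(intervals)
    return 60.0 / mean_interval_s


def heart_rate_change(baseline_beats_s, epoch_beats_s):
    """Difference in mean heart rate (bpm) between an epoch and its baseline."""
    return heart_rate_bpm(epoch_beats_s) - heart_rate_bpm(baseline_beats_s)
```

A positive value indicates that heart rate was higher during the epoch than during baseline.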

Facial Motor Analysis: Through collaboration with our close colleagues at the University of Pittsburgh and Carnegie Mellon University, we use the Facial Action Coding System (FACS) and its computer-vision application “AFAR” to track and quantify changes in the facial action units underlying emotional expressions. These data provide an entry point for examining the relationship between facial expressions and applied stimulation paradigms, possible dissociations between facial expression and internal mood, and changes in gestural mimicry and empathy.

Translating electrophysiology to stimulation: We are working with a unique patient population (UH3-NS103549), which allows us to explore analytical methods for translating between the electrophysiological components of neural responses to stimulation, and for layering these responses together so that they ideally reflect the neural signature of euthymic, or non-depressed, mood.

Auditory Oddball: The oddball paradigm is classically used to examine salience processing, or the ability to identify and orient towards important environmental stimuli. The task consists of the repeated presentation of the same ‘standard’ stimulus, randomly interleaved with a different ‘oddball’ stimulus, where the oddball stimulus is known to elicit distinct physiological responses in both the central and peripheral nervous systems. Using simple sounds combined with neural recordings, pupillometry, and peripheral nervous system tracking, we elicit salience responses to examine how this essential sensory processing mechanism might be altered in mood disorders such as depression.
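
The trial structure described above can be sketched as a randomized sequence of stimulus labels. This is a minimal illustration, not the task code used in the lab: the function name `oddball_sequence` and the 20% oddball fraction are assumptions chosen for the example, and a real task might add constraints such as a minimum number of standards between oddballs.

```python
import random


def oddball_sequence(n_trials, oddball_fraction=0.2, seed=None):
    """Return a list of 'standard' and 'oddball' labels in random order.

    oddball_fraction is a hypothetical default; the exact proportion of
    oddballs is fixed, and only their positions are randomized.
    """
    rng = random.Random(seed)  # a seeded generator makes the order reproducible
    n_oddballs = round(n_trials * oddball_fraction)
    sequence = ["oddball"] * n_oddballs + ["standard"] * (n_trials - n_oddballs)
    rng.shuffle(sequence)  # randomly interleave oddballs among standards
    return sequence
```

Each label would then be mapped to a sound, for example a frequent tone for 'standard' and a rarer, different tone for 'oddball', with the neural and peripheral recordings time-locked to each presentation.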