Baylor College of Medicine – Department of Psychiatry

Chadi G. Abdallah has expertise in antidepressant clinical trials, translational clinical neuroscience, multimodal neuroimaging, and the development of rapid-acting antidepressants for the treatment of depression, PTSD, and other stress-related psychiatric disorders.

Abdallah, C. G., Sanacora, G., Duman, R. S., & Krystal, J. H. (2015). Ketamine and rapid-acting antidepressants: a window into a new neurobiology for mood disorder therapeutics. Annual Review of Medicine, 66, 509–523. https://doi.org/10.1146/annurev-med-053013-062946

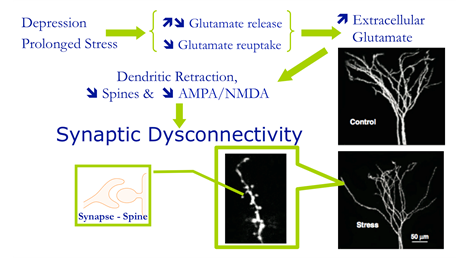

Ketamine is the prototype for a new generation of glutamate-based antidepressants that rapidly alleviate depression within hours of treatment. Over the past decade, there has been replicated evidence demonstrating the rapid and potent antidepressant effects of ketamine in treatment-resistant depression. Moreover, preclinical and biomarker studies have begun to elucidate the mechanism underlying the rapid antidepressant effects of ketamine, offering a new window into the biology of depression and identifying a plethora of potential treatment targets. This article discusses the efficacy, safety, and tolerability of ketamine, summarizes the neurobiology of depression, reviews the mechanisms underlying the rapid antidepressant effects of ketamine, and discusses the prospects for next-generation rapid-acting antidepressants.

UT Health Professor – Department of Physical Medicine & Rehabilitation

Tatiana Schnur’s lab studies stroke patients in the acute stages. They use CAMRI imaging to determine the size and location of the lesions.

Junhua Ding, Randi C Martin, A Cris Hamilton, Tatiana T Schnur, Dissociation between frontal and temporal-parietal contributions to connected speech in acute stroke, Brain, Volume 143, Issue 3, March 2020, Pages 862–876.

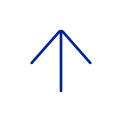

Humans are uniquely able to retrieve and combine words into syntactic structure to produce connected speech. Previous identification of focal brain regions necessary for production focused primarily on associations with the content produced by speakers with chronic stroke, where function may have shifted to other regions after reorganization occurred. Here, we relate patterns of brain damage with deficits to the content and structure of spontaneous connected speech in 52 speakers during the acute stage of a left hemisphere stroke. Multivariate lesion behavior mapping demonstrated that damage to temporal-parietal regions impacted the ability to retrieve words and produce them within increasingly complex combinations. Damage primarily to inferior frontal cortex affected the production of syntactically accurate structure. In contrast to previous work, functional-anatomical dissociations did not depend on lesion size likely because acute lesions were smaller than typically found in chronic stroke. These results are consistent with predictions from theoretical models based primarily on evidence from language comprehension and highlight the importance of investigating individual differences in brain-language relationships in speakers with acute stroke.

Baylor College of Medicine – Department of Neuroscience

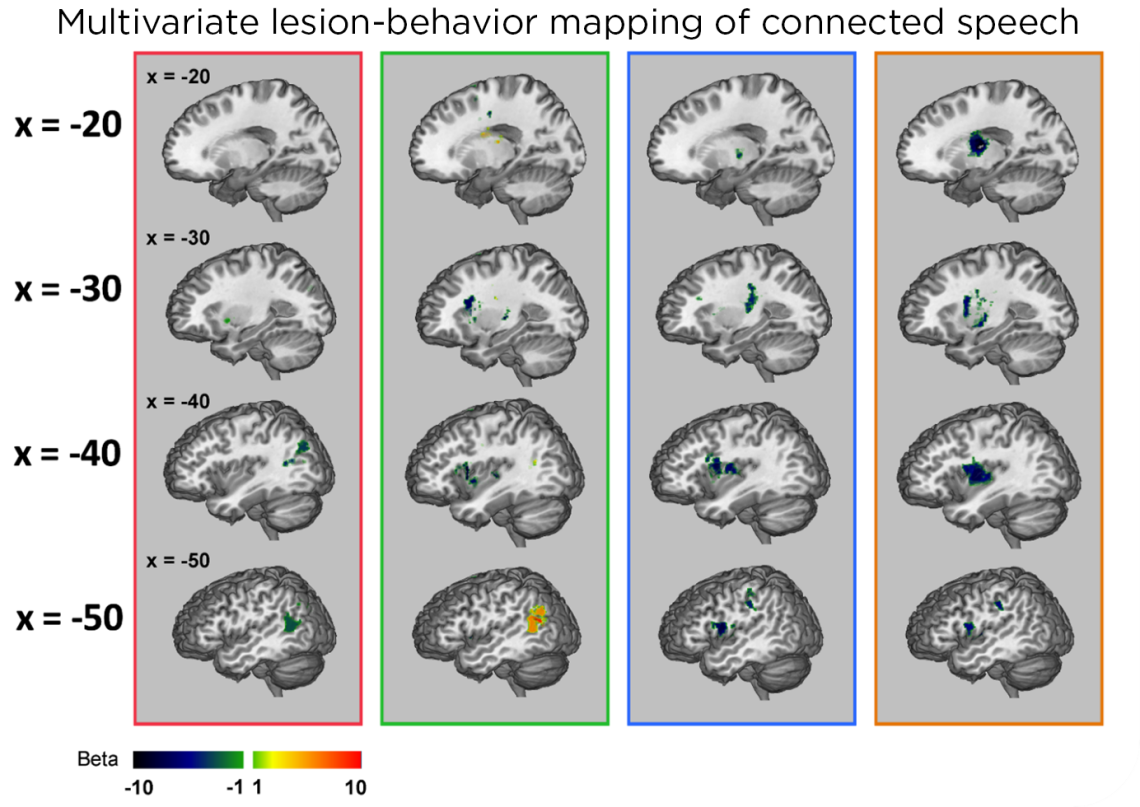

The Yau lab uses functional MRI (fMRI) to characterize brain responses to tactile vibration.

(Preprint) Lingyan Wang, Jeffrey M Yau, Signatures of vibration frequency tuning in the human neocortex. doi: https://doi.org/10.1101/2021.10.03.462923

The spectral content of vibrations produced in the skin conveys essential information about textures and underlies sensing through hand-held tools. Humans can perceive and discriminate vibration frequency, yet the central representation of this fundamental feature is unknown. Using fMRI, we discovered that cortical responses are tuned for vibration frequency. Voxel tuning was biased in a manner that reflects perceptual sensitivity and the response profile of the Pacinian afferent system. These results imply the existence of tuned populations that may encode naturalistic vibrations according to their constituent spectra.

Baylor College of Medicine & Menninger Clinic

McNair Initiative for Neuroscience Discovery

Dr. Ramiro Salas’ research focuses on human brain imaging, specifically to study drug addiction, depression, anxiety, autism, and other brain-related problems. Dr. Salas’ laboratory strives to find relationships among brain imaging parameters, genetic data, and clinical data to advance toward improved psychiatric diagnostics and personalized treatment.

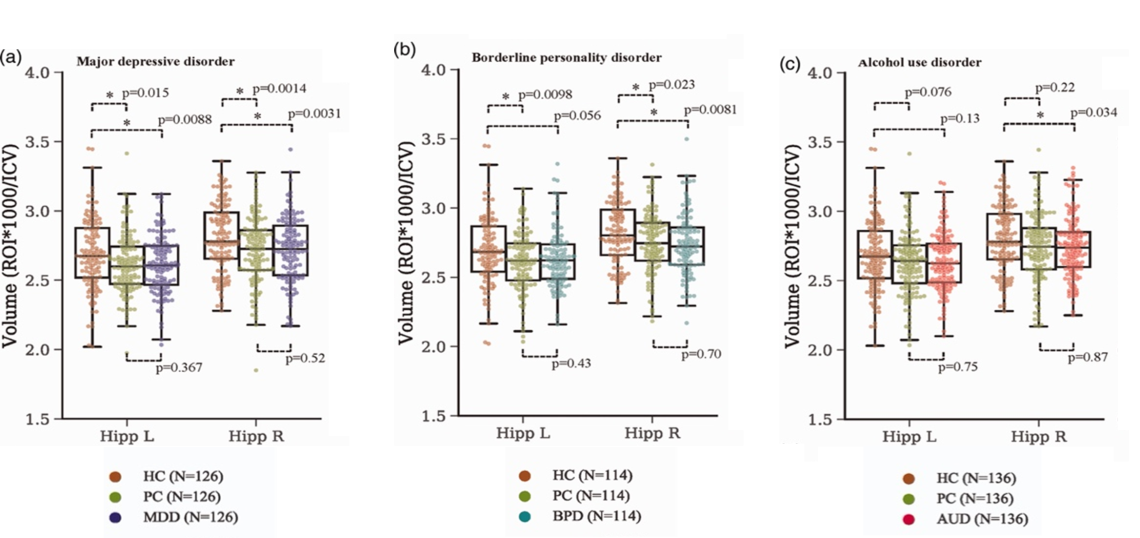

Gosnell SN, Meyer MJ, Jennings C, Ramirez D, Schmidt J, Oldham J, Salas R. Hippocampal Volume in Psychiatric Diagnoses: Should Psychiatry Biomarker Research Account for Comorbidities? Chronic Stress. 2020 4:1-10.

Background: Many research papers claim that patients with specific psychiatric disorders (major depressive disorder, posttraumatic stress disorder, borderline personality disorder, alcohol use disorder, and others) have smaller hippocampi, but most of those reports compared patients to healthy controls. We hypothesized that if psychiatrically matched controls (psychiatric control, matched for demographics and psychiatric comorbidities) were used, much of the biomarker literature in psychiatric research would not replicate. We used hippocampus and amygdala volume only as examples, as these are very commonly replicated results in psychiatry biomarker research. We propose that psychiatry biomarker research could benefit from using psychiatric controls, as the use of healthy controls results in data that are not disorder-specific.

Method: Hippocampus/amygdala volumes were compared between major depressive disorder, sex-/age-/race-matched healthy control, and psychiatric control (N = 126/group). Similar comparisons were performed for posttraumatic stress disorder (N = 67), borderline personality disorder (N = 111), and alcohol use disorder (N = 136).

Results: Major depressive disorder patients had smaller left (p = 8.79 × 10⁻³) and right (p = 3.13 × 10⁻³) hippocampal volumes than healthy control. Posttraumatic stress disorder had smaller left (p = 0.018) and right (p = 8.64 × 10⁻⁴) hippocampi than healthy control. Borderline personality disorder had smaller right hippocampus (p = 7.90 × 10⁻³) and amygdala (p = 1.49 × 10⁻³) than healthy control. Alcohol use disorder had smaller right hippocampus (p = 0.034) and amygdala (p = 0.024) than healthy control. No differences were found between any of the four diagnostic groups and psychiatric control.

Conclusion: When psychiatric controls were used, there was no difference in hippocampal or amygdalar volume between any of the diagnoses studied and controls. This strategy (keeping all possible relevant variables matched between experimental groups) has been used to advance science for hundreds of years, and we propose should also be used in biomarker psychiatry research.

Thomas S. Trammell Research Professorship in Child Psychiatry

Baylor College of Medicine

Psychiatry & Behavioral Sciences Professor

Baylor College of Medicine

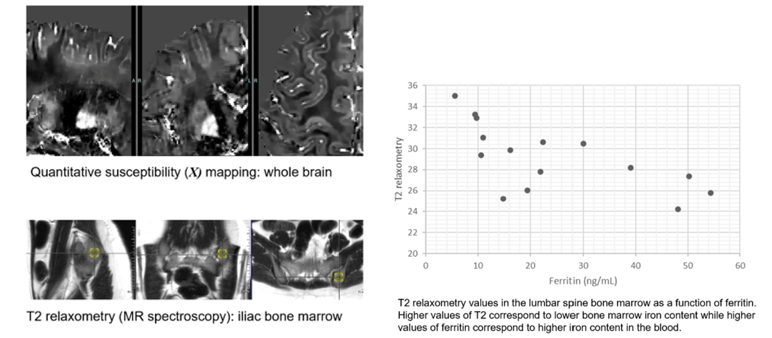

Dr. Calarge examines whether body iron stores affect brain iron levels and whether this relates to brain development and function. If iron supplementation can increase brain iron levels, it may improve psychiatric symptoms.

Quantitative Susceptibility Mapping (QSM) is a cutting-edge MRI technique that maps the magnetic susceptibility of tissue to detect and quantify iron, calcium, and myelin. These substances influence the brain’s magnetic environment and are implicated in aging, neurodegeneration, and psychiatric disorders.

This study uses QSM to detail the distribution of iron in the brains of adolescents with and without iron deficiency, and MR spectroscopy to estimate iron content in the iliac and lumbar spine bone marrow in a safe and non-invasive way.

Assistant Professor

Menninger Psychiatry and Behavioral Sciences

Baylor College of Medicine

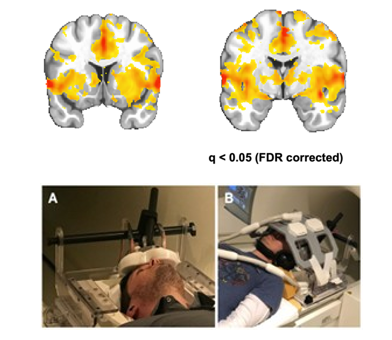

Dr. Oh and colleagues are currently collecting fMRI data using interleaved TMS-fMRI, a state-of-the-art, non-invasive in vivo method for probing functional connectivity and assessing the effects of TMS in subcortical brain regions. This technique has the potential to advance fMRI research beyond traditional association studies and into the realm of causal circuit manipulation. By capturing dynamic neural changes, the interleaved TMS-fMRI approach allows the team to investigate the mechanisms by which TMS induces functional activation across brain networks. In the abstract titled “TMS direct effects of orbitofrontal cortex stimulation – An interleaved TMS-fMRI study,” presented at the 2024 Organization for Human Brain Mapping (OHBM) conference, they demonstrated that single TMS pulses to the left orbitofrontal cortex (OFC) can evoke responses in distinct brain regions, including the bilateral caudate, putamen, amygdala, anterior cingulate cortex, and precuneus (see Figure). These findings suggest that the left OFC may serve as a promising target for TMS interventions in psychiatric disorders, particularly substance use disorder, through modulation of OFC-reward circuitry.

Figure from Oh H, Myerson J, Salas R. TMS direct effects of orbitofrontal cortex stimulation – An interleaved TMS-fMRI study. OHBM 2024

Assistant Professor, Department of Integrative Biology & Physiology, UCLA

Adjunct Assistant Professor, Department of Psychological Sciences, Rice University

Director of the Neuroscience of Memory, Mood, and Aging Lab

Rice University – Neuroscience of Memory, Mood & Aging Lab

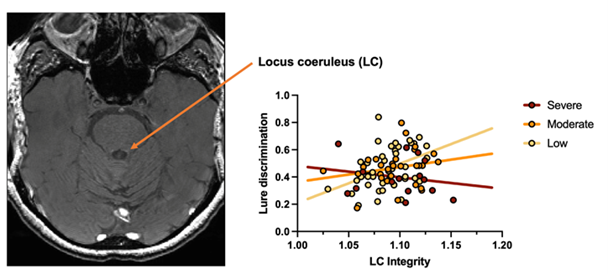

Drs. Lorena Ferguson and Stephanie Leal collected MRI images of older adults, focusing on the locus coeruleus. The locus coeruleus (LC) is a small brainstem structure that is the primary source of norepinephrine (NE) to the brain and is essential for learning, arousal, and emotional memory. Higher LC integrity in late life is typically considered to be neuroprotective. However, emerging work has found that high levels of neuropsychiatric symptoms in late life are associated with higher LC integrity in older adults (OAs) with high tau burden, a primary biomarker of Alzheimer’s disease (AD). Here, they assessed how transdiagnostic neuropsychiatric symptom profiles in OAs were associated with LC integrity and memory performance.

Dr. Ferguson administered an emotional memory task to cognitively healthy OAs (N = 87, Age M = 67.7). LC integrity was assessed with a 3T MRI structural neuromelanin-sensitive MT-weighted sequence. They utilized K-means clustering of depression and anxiety symptoms, which resulted in three clusters: severe (N = 15), moderate (N = 29), and low (N = 43) levels of neuropsychiatric symptoms (Figure below).
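As a rough illustration of the clustering step described above, the following minimal sketch runs a plain k-means (Lloyd's algorithm) with three clusters on two-dimensional symptom scores. Everything in it is an assumption made for illustration: the scores are synthetic, the `kmeans` helper and its deterministic initialization are hypothetical, and the page does not state which questionnaires, scaling, or software the lab actually used.

```python
import random
from statistics import mean


def kmeans(points, k, max_iter=100):
    """Plain Lloyd's k-means on a list of equal-length numeric tuples."""
    # Deterministic start (an illustrative choice): sort by total score
    # and take k evenly spaced points as the initial centroids.
    ordered = sorted(points, key=sum)
    centroids = [ordered[(2 * i + 1) * len(ordered) // (2 * k)] for i in range(k)]
    labels = None
    for _ in range(max_iter):
        # Assignment step: nearest centroid by squared Euclidean distance.
        new_labels = [
            min(range(k),
                key=lambda c: sum((p - q) ** 2 for p, q in zip(pt, centroids[c])))
            for pt in points
        ]
        if new_labels == labels:
            break  # assignments stopped changing, so the fit has converged
        labels = new_labels
        # Update step: move each centroid to the mean of its members.
        for c in range(k):
            members = [pt for pt, lab in zip(points, labels) if lab == c]
            if members:
                centroids[c] = tuple(mean(dim) for dim in zip(*members))
    return labels, centroids


# Synthetic (depression, anxiety) scores; NOT data from the study.
rng = random.Random(0)
group_means = [(3.0, 3.0), (10.0, 10.0), (18.0, 18.0)]
scores = [
    (rng.gauss(mx, 1.0), rng.gauss(my, 1.0))
    for mx, my in group_means
    for _ in range(20)
]

labels, centroids = kmeans(scores, k=3)

# Name the clusters by ascending mean symptom level.
order = sorted(range(3), key=lambda c: sum(centroids[c]))
names = {c: name for c, name in zip(order, ["low", "moderate", "severe"])}
sizes = {names[c]: labels.count(c) for c in range(3)}
print(sizes)
```

In practice, symptom scales are often standardized before clustering so that no single questionnaire dominates the distance measure; whether that was done here is not stated.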

Group differences in LC integrity were found, in which OAs with severe and moderate symptoms showed higher LC integrity relative to those with low symptoms. They also found that higher LC integrity was associated with better memory performance in those with low symptoms, but worse memory in those with severe symptoms.

While greater LC integrity is generally thought to be beneficial, this may not be the case for those experiencing significant mental health symptoms. This is supported by the finding that higher LC integrity in those with severe symptoms was associated with worse memory, which suggests that greater LC integrity in this group may be detrimental and potentially indicative of a higher risk of AD.

McGovern Medical School – Department of Pediatrics

Associate Professor

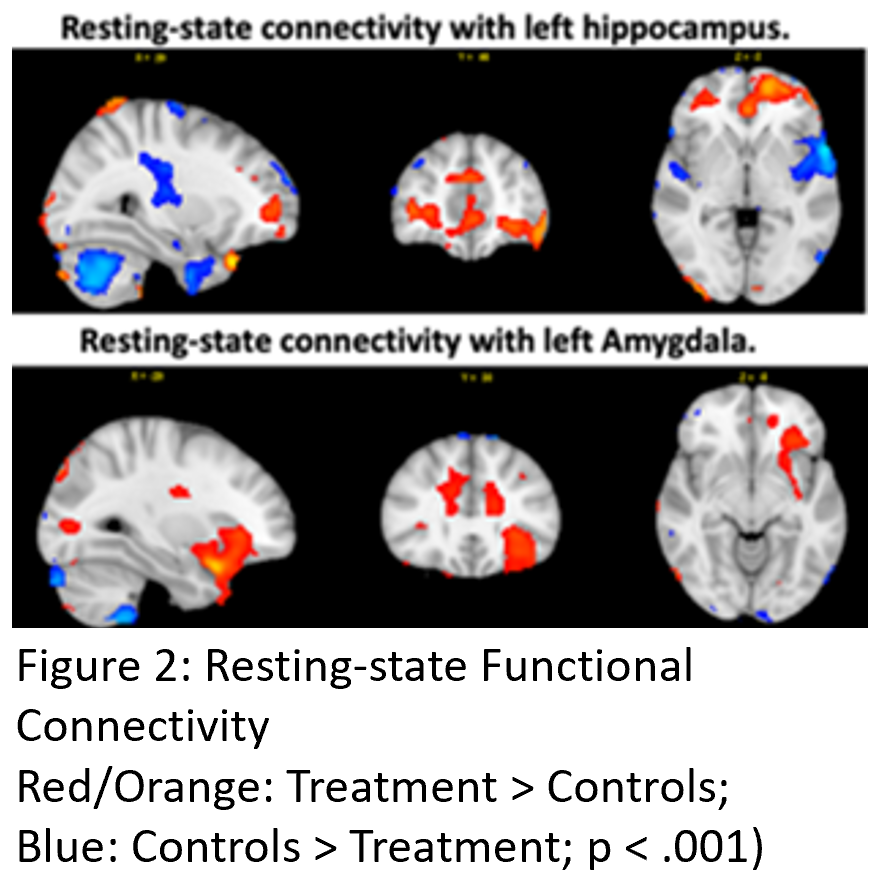

Dr. Dana DeMaster's research investigates how parental behavior shapes the development of frontal-limbic neural networks, influencing early emotion regulation and cognitive outcomes.

With over a decade of experience in developmental neuroimaging at CAMRI, she has led the collection of structural and functional MRI data from toddlers during natural sleep. Her most recent work reveals strong links between highly responsive parenting and typical maturation of frontal brain circuits tied to cognition and self-regulation in preterm toddlers (Figure 1). These results hold key implications for supporting executive function skills in high-risk children (see Muñoz et al., 2024). Dr. DeMaster and Dr. Johanna Bick co-lead an NIH-funded study (NICHD 5R01HD100560) investigating parenting interventions aimed at improving brain development trajectories and reducing cognitive and socioemotional risks in preterm infants and toddlers. Preliminary data suggest that early positive parenting interventions improve functional brain connectivity (Figure 2).
